Directions Health Services has reported that Australian drug checking organizations are facing challenges with Meta, the parent company of Facebook and Instagram, which has been removing their posts aimed at warning the public about harmful substances.

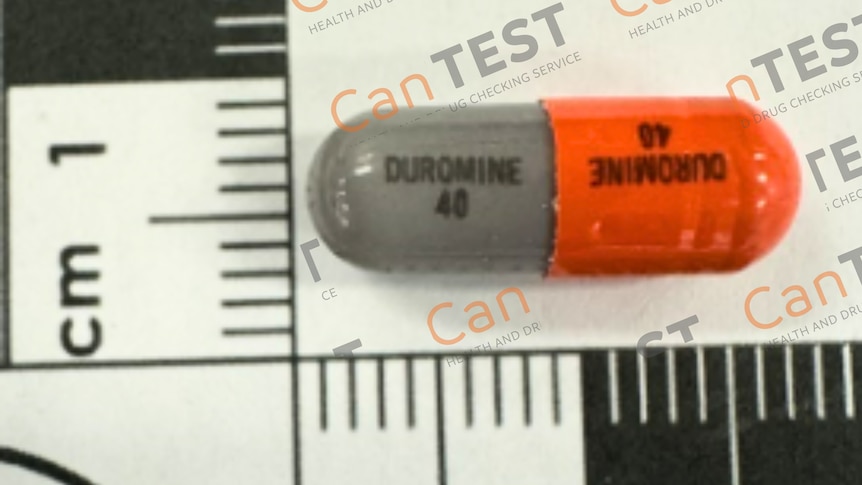

Groups such as CanTEST in Canberra use social media to share critical health alerts about dangerous drug combinations that may pose risks to the community. These organizations are now urging Meta to revise its policies on educational content related to illegal drugs and are seeking intervention from the eSafety Commissioner.

Public health advocates emphasize that the automatic censorship of educational posts on social media could endanger lives, as it limits the dissemination of vital information about drug safety. The Australian Injecting and Illicit Drug Users League (AIVL) has highlighted the removal of numerous “critical alerts,” which hampers its ability to communicate essential information to those in need.

Several organizations, including Pill Testing Australia, CanTEST, and New Zealand’s KnowYourStuffNZ, have had posts removed and accounts suspended. AIVL reported that, in some instances, entire pages and personal accounts have been permanently deleted.

AIVL has indicated that Meta’s automated content moderation tools are erroneously marking these health alerts on Facebook and Instagram as endorsements of drug use.

According to John Gobeil, the chief executive of AIVL, “These services depend on social media to inform the public about what substances are currently available and how to stay safe. Blocking these messages prevents people from knowing about the service and limits their ability to make informed choices.”

David Caldicott, the clinical lead at CanTEST and Pill Testing Australia, who is also an emergency physician, noted that posts may be “withheld, altered, or censored due to their content about drugs.” He remarked that while the information is health-related, the shift from human moderation to AI-driven systems has resulted in unnecessary content removal.

Dr. Caldicott pointed out that Meta, being an American company, may be influenced by a different cultural perspective on drug education. CanTEST’s mission is to inform drug users about the risks associated with dangerous substances and encourage them to have their drugs tested prior to use. Its aim is to educate rather than punish, and ultimately to prevent drug-related fatalities.

He expressed concern that there seems to be a trend of stifling discussions on illicit drugs, even when they pertain to health. While artificial intelligence has exacerbated the issue, Dr. Caldicott noted that the challenge has existed since the early days of social media.

He argued that social media platforms possess the technical capability to manage this content appropriately, but the ethical oversight has not advanced at the same pace. “It’s time for them to mature and align with modern standards of information dissemination for young audiences,” he stated.

Dr. Caldicott criticized the actions of social media companies as “unacceptable,” particularly given that younger populations often rely on these platforms for news and information. “We are providing crucial health messages to young individuals who need them, yet unqualified individuals are obstructing that communication,” he added.

He cautioned that without access to information about hazardous drugs, individuals might consume them without proper knowledge, leading to potentially fatal outcomes.

Dr. Caldicott is urging social media firms to commit to collaborating with healthcare professionals to ensure timely dissemination of accurate information. The affected organizations are also calling on the eSafety Commissioner to take action to “compel” Meta to restore all accounts and content that were removed or suspended for alleged violations of drug-related community standards.

In response to this situation, CanTEST has launched an app named Night Coach, designed to promote awareness and information without relying on social media platforms.

Stephanie Stephens from Directions Health Services, which operates CanTEST, mentioned that they are actively seeking solutions to the challenges encountered with social media. “We are trying to engage with Meta to resolve this situation, but so far, our efforts have been unproductive,” she noted.

Stephens emphasized that they continue to utilize various platforms, including their website, to communicate information, although the reach of social media is invaluable. “We are exploring ways to better address this issue,” she added.

She highlighted that CanTEST has garnered a significant following on social media, with tens of thousands of users. She recounted an incident in December when a post regarding a dangerous opioid was removed just prior to the Spilt Milk festival in Canberra. She encouraged individuals seeking alerts and information to visit the CanTEST website directly.

In response to inquiries from ABC News, Meta declined to comment. The ABC has also reached out to the eSafety Commissioner for a statement.